I was recently converting a website to have a responsive design using Bootstrap. The content is served from an Azure SQL database and I needed to make a copy of the database so I could update the content in a test environment first.

There a couple of options on how to export a SQL database from Azure:

- Using combination of SQL Server Integration Services (to export the data) and DB creation scripts (to create the database objects).

- The Import/Export service in SQL Azure.

I opted to use the Export service in Azure to a create a BACPAC and restore the database in SQL Express on my dev machine.

Using the Export Service in Azure

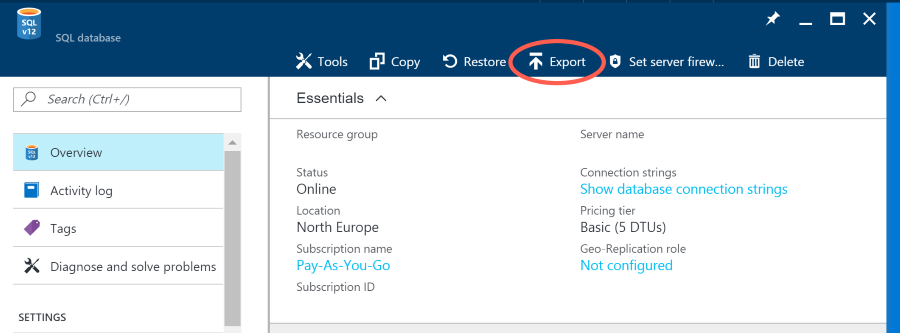

After logging into the Azure Portal, find the SQL database you want to export. Click on the Overview option, and then the Export option:

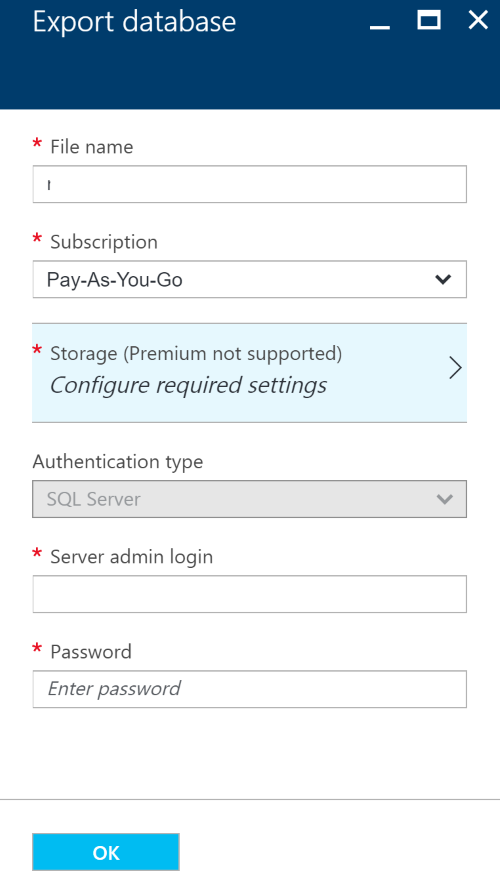

Next you need to specify where to save the export. For this you need an Azure Blob storage to save it to (make sure you save it to a private blob storage as you do not want a backup to be publically accessible). You will also need to enter the server admin login and password.

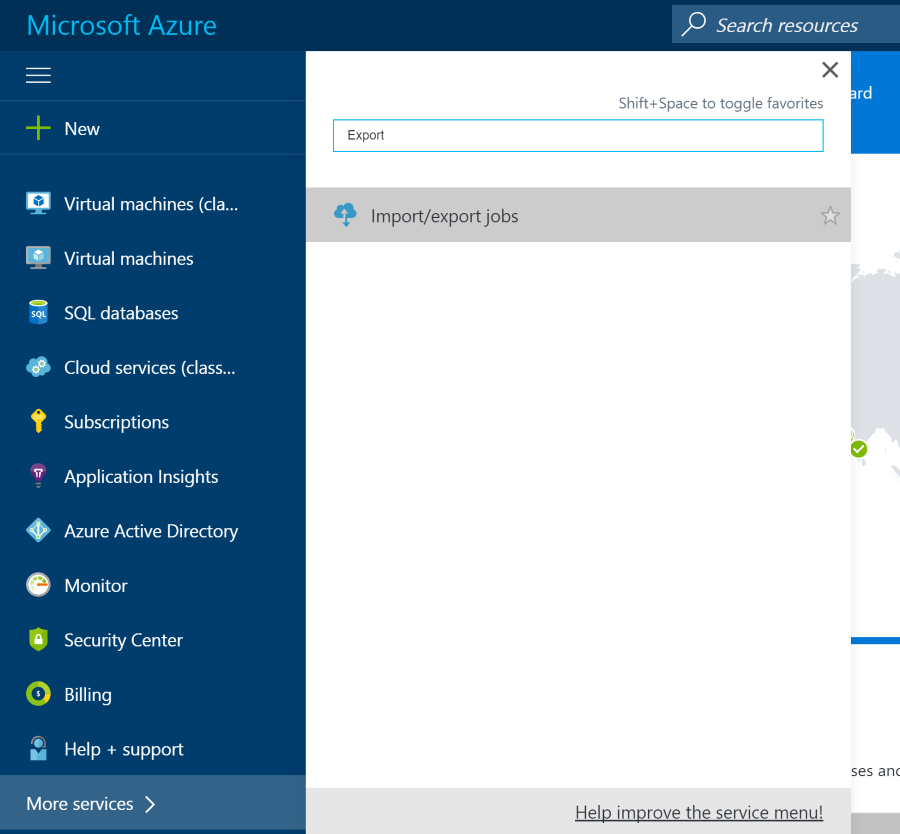

After clicking OK Azure will queue the export job. You can monitor the export from the Import/Export area of Azure. This can be found by searching for Export with Azure:

Note: When I first did this, the job said it had completed but the file turned out to 0 bytes in size. After waiting a couple of minutes I downloading the file again and it was fine the second time.

Downloading the BACPAC

When the Export job has completed, the BACPAC will be saved into the Blob storage location you specified. To access the Blob storage you can use the Azure Storage Explorer from Microsoft:

Import into SQL Express

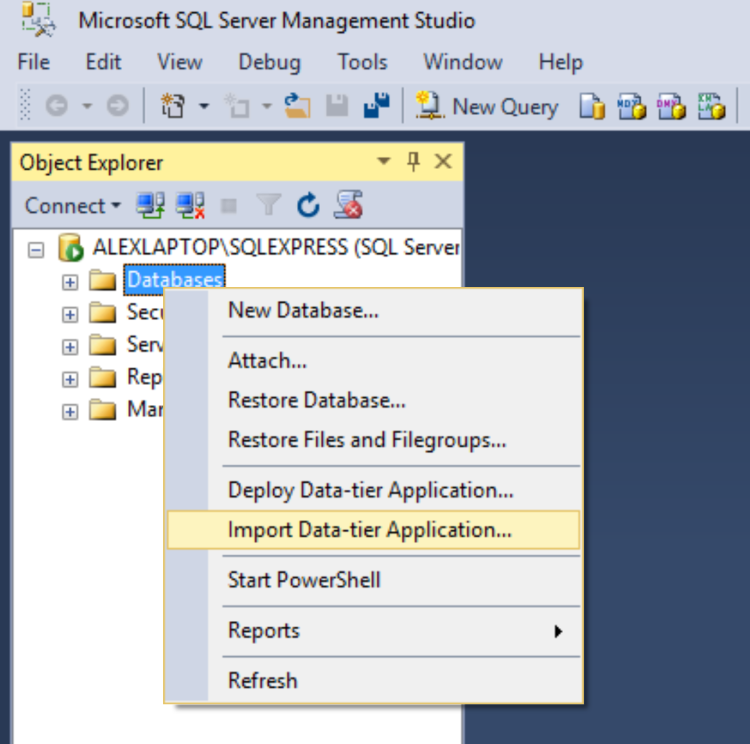

The next step is to use the SQL Server Management Studio to import the data using the Import Data-tier Application wizard. Right click ‘Databases’ in SQL Server Management Studio and select ‘Import Data-tier Application…':

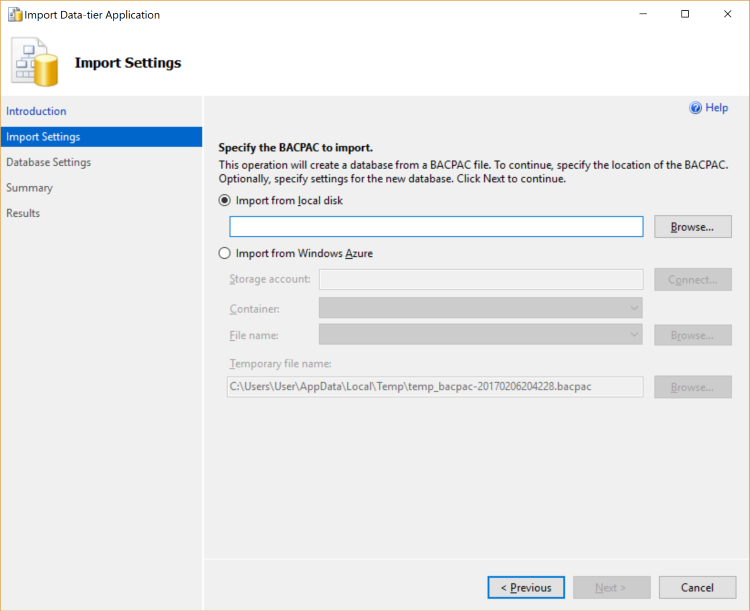

Then follow the steps in the wizard, first selecting the BACPAC that was downloaded from Azure:

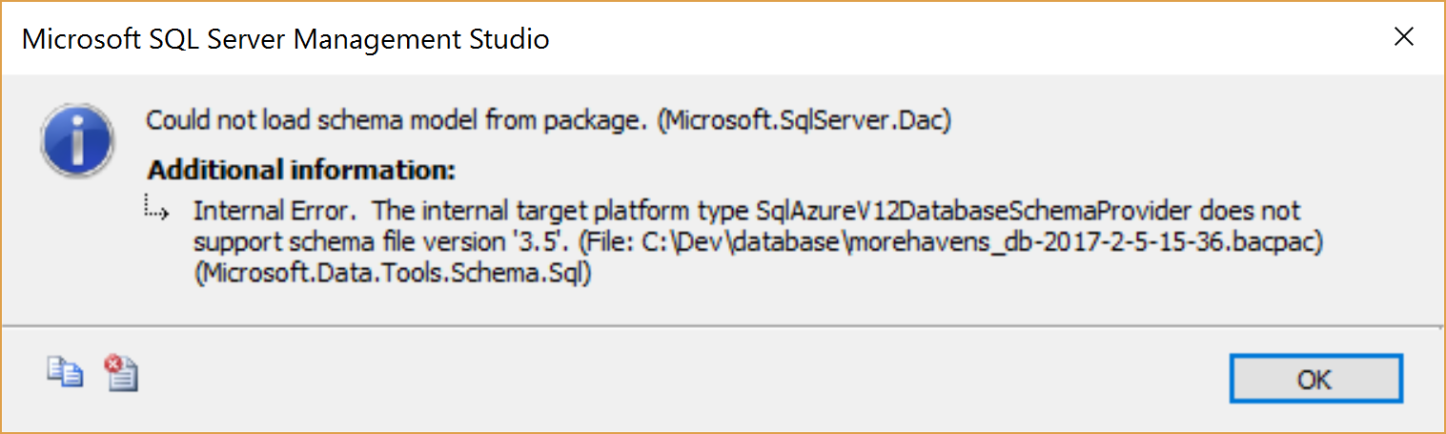

The first time I tried this I got the following error:

All I needed to do to remedy this was to update to the latest SQL Server Management Studio: https://docs.microsoft.com/en-us/sql/ssms/download-sql-server-management-studio-ssms

With the latest SQL Server Management Studio I successfully imported the BACPAC and had a copy of the database on my dev machine.

Some considerations from Microsoft

- For an archive to be transactionally consistent, you must ensure either that no write activity is occurring during the export, or that you are exporting from a transactionally consistent copy of your Azure SQL database.

- The maximum size of a BACPAC file archived to Azure Blob storage is 200 GB. To archive a larger BACPAC file to local storage, use the SqlPackage command-prompt utility. This utility ships with both Visual Studio and SQL Server. You can also download the latest version of SQL Server Data Tools to get this utility.

- Archiving to Azure premium storage by using a BACPAC file is not supported.

- If the export operation exceeds 20 hours, it may be canceled. To increase performance during export, you can:

- Temporarily increase your service level.

- Cease all read and write activity during the export.

- Use a clustered index with non-null values on all large tables. Without clustered indexes, an export may fail if it takes longer than 6-12 hours. This is because the export service needs to complete a table scan to try to export entire table. A good way to determine if your tables are optimized for export is to run DBCC SHOW_STATISTICS and make sure that the RANGE_HI_KEY is not null and its value has good distribution. For details, see DBCC SHOW_STATISTICS.